premium automotive hmi

hyundai × bang & olufsen

audio-first hmi for luxury driving


the problem

dense menus & technical controls

focus on playback, not feeling

no adaptation to context

siloed media & sound controls

current state

Fragmented controls, technical tuning interfaces, and deep menu hierarchies force drivers to choose between sound quality and keeping their attention on the road.

the solution

beosonic → beoscapes → mood radio in one seamless experience

key screens

beosonic

Transforming technical sound control into an emotional tuning experience.

Why emotional descriptors over EQ? Traditional EQ requires understanding frequency bands, predicting how each change will be perceived, and making micro-adjustments. This creates high cognitive load and distraction risk while driving.

Research insight: Users describe sound using emotional language ("warm," "energetic"), not technical terms. We translated acoustic parameters into human emotional descriptors.

Shift: Technical control → Emotional intent

Emotional tuning interface: The circular sound field acts as a continuous emotional tuning surface. Moving the control node shifts bass depth, mid-range presence, treble clarity, and spatial balance simultaneously. Humans think in emotions, not frequencies.
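To make "one gesture, four parameters" concrete, here is a minimal sketch of how a node position on a circular field could drive bass, mid-range, treble, and spatial width together. The axis layout (relaxed/energetic horizontally, warm/bright vertically), the `MAX_GAIN_DB` limit, and the linear mappings are all hypothetical assumptions for illustration, not the actual Beosonic tuning model.

```python
import math
from dataclasses import dataclass

MAX_GAIN_DB = 6.0  # hypothetical boost/cut limit per band


@dataclass(frozen=True)
class SoundProfile:
    bass_db: float
    mid_db: float
    treble_db: float
    spatial_width: float  # 0.0 = focused, 1.0 = fully wide


def node_to_profile(x: float, y: float) -> SoundProfile:
    """Map one node position to four sound parameters at once.

    Hypothetical axes: x runs from relaxed (-1) to energetic (+1);
    y runs from warm (-1) to bright (+1).
    """
    r = math.hypot(x, y)
    if r > 1.0:  # keep the node inside the circular field
        x, y = x / r, y / r
    return SoundProfile(
        bass_db=-y * MAX_GAIN_DB,      # warmer -> more bass depth
        mid_db=x * MAX_GAIN_DB / 2,    # more energetic -> more mid-range presence
        treble_db=y * MAX_GAIN_DB,     # brighter -> more treble clarity
        spatial_width=(1.0 - x) / 2,   # more relaxed -> wider stage
    )
```

Dragging the node to the bottom of the field, `node_to_profile(0.0, -1.0)`, yields +6 dB bass and -6 dB treble in a single move, which is the kind of coupled change a driver would otherwise make band by band in a traditional EQ.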

Preset quick access: One-tap sound profiles (Podcast, Music, Movie, Party, Custom) reduce interaction time during high-focus driving. Exploration happens on the circular field; execution uses the shortcuts.

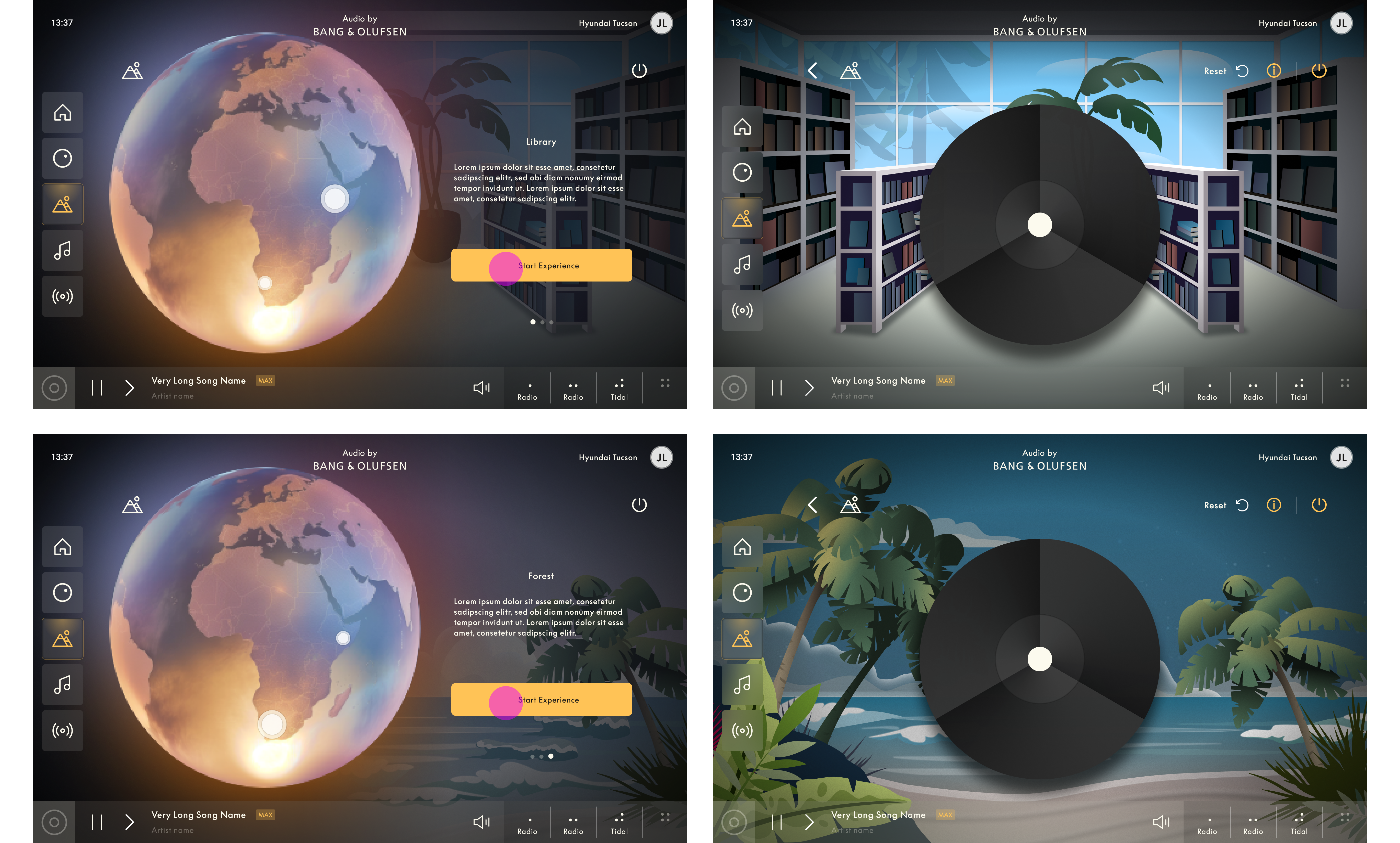

beoscapes

Transforming in-car sound into spatial, emotional environments. Users enter an atmosphere, and the cabin becomes an immersive audio-visual space.

Why 3D spatial soundscapes? Traditional stereo limits emotional depth. 3D spatial sound adds depth, width, and movement, which increases engagement and reduces auditory fatigue over long drives.

Visual + Audio immersion: When visual cues reinforce audio direction and texture, the brain forms a single immersive perception. Beoscapes synchronizes spatial audio, dynamic lighting, and ambient motion.

Best contexts: Highway cruising, night driving, long journeys, scenic drives, solo retreats. Beoscapes is not intended for high-alert driving conditions.

Visual environment selector: Globe-based exploration lets users discover soundscapes as places (Library, Forest, Beach, Lounge). The spatial metaphor speeds up understanding: users find environments naturally, without menus.

Spatial audio controls: The control disc positions sound within the cabin. Central = balanced immersion, Forward = focused listening, Surround = environmental depth. Direct manipulation beats abstract configuration.
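One common way to turn a disc position into cabin placement is constant-power panning across speaker zones. The sketch below assumes four hypothetical zones (front-left, front-right, rear-left, rear-right) and a disc where +y is forward; it illustrates the technique only and is not necessarily how Beoscapes renders spatial audio.

```python
import math


def clamp_to_disc(x: float, y: float) -> tuple[float, float]:
    """Keep the control point inside the unit circle drawn on screen."""
    r = math.hypot(x, y)
    return (x / r, y / r) if r > 1.0 else (x, y)


def zone_gains(x: float, y: float) -> dict[str, float]:
    """Constant-power gains for four hypothetical cabin zones.

    x: -1 (left) .. +1 (right); y: -1 (rear) .. +1 (forward).
    The squared gains always sum to 1, so overall loudness stays
    steady while the sound image moves around the cabin.
    """
    x, y = clamp_to_disc(x, y)
    pan_lr = (x + 1.0) * math.pi / 4  # 0 = full left, pi/2 = full right
    pan_fb = (1.0 - y) * math.pi / 4  # 0 = full front, pi/2 = full rear
    left, right = math.cos(pan_lr), math.sin(pan_lr)
    front, rear = math.cos(pan_fb), math.sin(pan_fb)
    return {
        "front_left": left * front,
        "front_right": right * front,
        "rear_left": left * rear,
        "rear_right": right * rear,
    }
```

At the centre every zone receives the same gain (0.5), matching "balanced immersion"; pushing the disc forward concentrates energy in the front zones for focused listening, and pulling it back shifts energy toward the rear zones.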

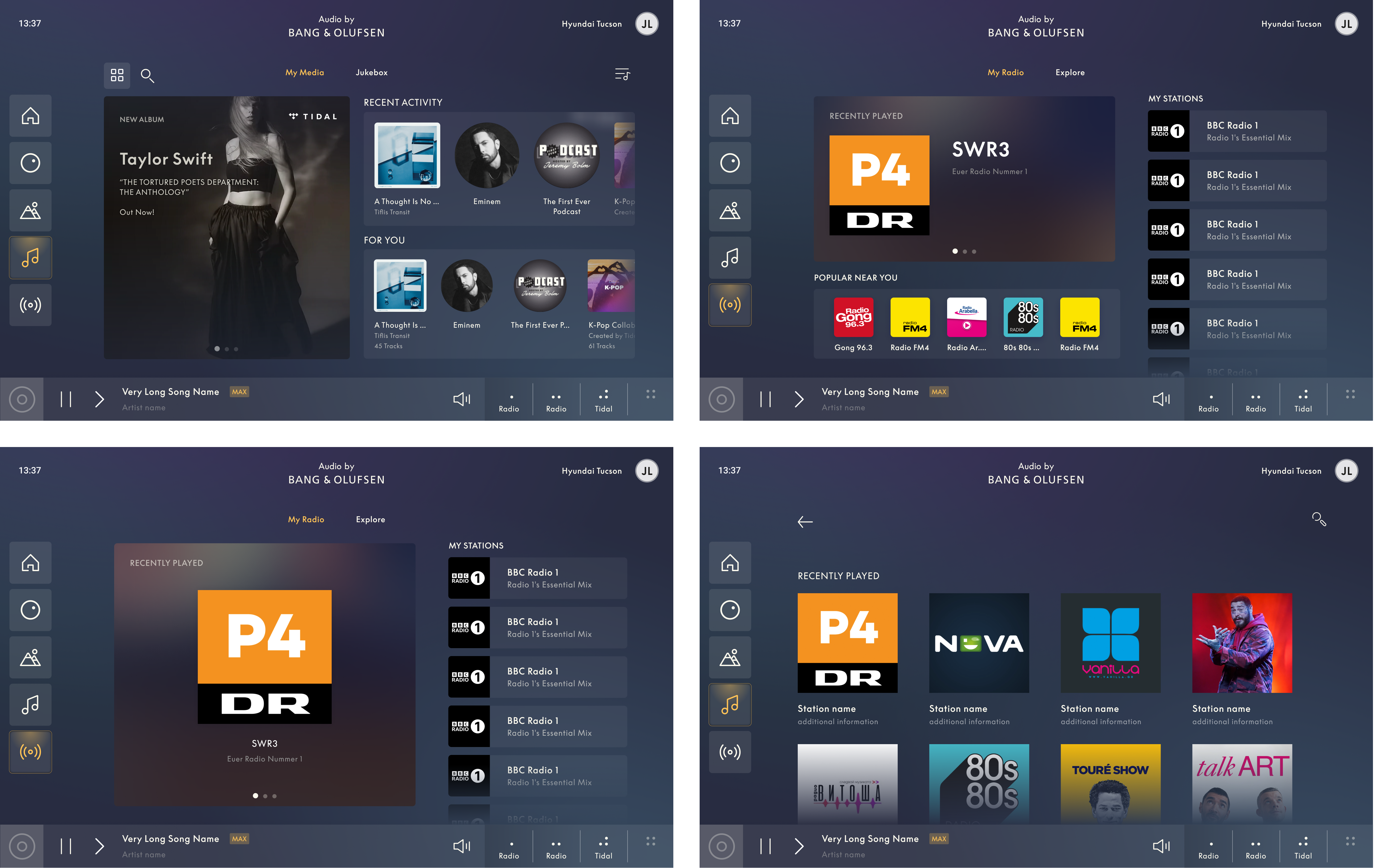

mood radio

Delivering the right sound for the right moment, without friction. Instead of choosing stations by frequency, users choose how they want to feel.

Why mood-based over frequency? Drivers often don't know what they want. Choice overload leads to indecision, scanning fatigue, and longer eyes-off-road time. Users describe intent emotionally: "I want something relaxing," not "Tune to 98.5 FM."

Reducing decision fatigue: Mood Radio collapses hundreds of stations into a small set of emotional choices. Recognition over recall: users recognize a mood instead of remembering station names.

Contextual signals: Time of day, driving conditions, listening history, trip duration, and vehicle state are used to surface situationally relevant content.

Mood selector: Quick emotional entry points (Relax, Focus, Party, Sleep, Energy, Comfort). Large tappable targets reduce glance time. Drivers select audio intent in under one second.

Content recommendations: The system curates live radio, talk shows, and music channels based on personal taste, local popularity, and context. Let the system think so the user doesn't have to.
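The Mood Radio flow (pick a mood, then let taste, popularity, and context rank the options) can be sketched as a filter followed by a weighted score. The `Station` fields, the context tags, and the weights below are hypothetical assumptions chosen for illustration; a production recommender would learn these from real listening data.

```python
from dataclasses import dataclass

# Hypothetical weights; a real system would tune these from usage data.
W_TASTE, W_POPULARITY, W_CONTEXT = 0.5, 0.2, 0.3


@dataclass(frozen=True)
class Station:
    name: str
    mood: str                 # one of the mood entry points, e.g. "Relax"
    taste: float              # 0..1, affinity inferred from listening history
    popularity: float         # 0..1, local popularity
    context_tags: frozenset = frozenset()  # e.g. {"night", "long_trip"}


def context_match(station: Station, context: set[str]) -> float:
    """Fraction of the current context signals this station suits."""
    if not context:
        return 0.0
    return len(station.context_tags & context) / len(context)


def score(station: Station, context: set[str]) -> float:
    return (W_TASTE * station.taste
            + W_POPULARITY * station.popularity
            + W_CONTEXT * context_match(station, context))


def recommend(stations: list[Station], mood: str,
              context: set[str], limit: int = 3) -> list[str]:
    """Collapse the catalogue to one mood, then rank by the weighted score."""
    candidates = [s for s in stations if s.mood == mood]
    ranked = sorted(candidates, key=lambda s: score(s, context), reverse=True)
    return [s.name for s in ranked[:limit]]
```

Because the mood filter runs first, the driver only ever sees a short, ranked list; context signals such as `{"night", "long_trip"}` can then lift a station that fits the journey above one the driver likes slightly more.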


design decisions

feel-based controls, no technical knowledge needed

context-aware sound that matches the journey

reduced decision fatigue while driving

impact

in automotive ux, true innovation comes not from adding features, but from removing friction while amplifying emotional experience.
